Is Data Science getting popular?

About

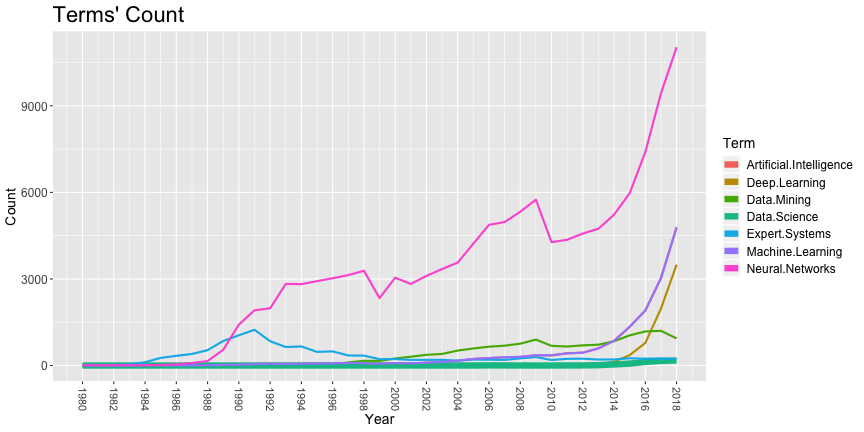

Let's see if Data Science is getting more popular through a simple and lazy experiment: querying Clarivate's Web of Science for some terms, and counting how often those terms occur in paper titles for each year in which they appear.

The Data

Some results were extracted from Clarivate's Web of Science beforehand to facilitate processing. From its main portal (www.webofknowledge.com) we ran searches for specific terms of interest, clicked on "analyze results", selected "Publication Years", chose to show 25 results and then used "Download data rows displayed in table". A sample file with downloaded data is shown below.

    Publication Years    records    % of 653
    2019    63     9.648
    2018    176    26.953
    2017    160    24.502
    2016    123    18.836
    2015    65     9.954
    2014    36     5.513
    2013    12     1.838
    2012    3      0.459
    2011    2      0.306
    2008    1      0.153
    2007    2      0.306
    2006    4      0.613
    2002    1      0.153
    2001    2      0.306
    2000    1      0.153
    1997    2      0.306
    (0 Publication Years value(s) outside display options.)
    (0 records (0.000%) do not contain data in the field being analyzed.)

We created one file for each of the terms "Artificial Intelligence", "Deep Learning", "Data Mining", "Data Science", "Expert System*", "Machine Learning", and "Neural Network*". The corresponding file names are, respectively, WoS_AI.txt, WoS_DL.txt, WoS_DM.txt, WoS_DS.txt, WoS_ES.txt, WoS_ML.txt, and WoS_NN.txt.

Reading the Data into a Data Frame

Before executing any code, let's load the libraries:

    library(data.table)
    library(ggplot2)

Our first step is to read that data into a data frame. It won't be straightforward since the format is pure text, without indication of which lines are data, headers or comments. Worse, there are lines at the end of the file that are not data and must be skipped before parsing the other lines.

One way to do that is to read all lines into a vector, remove the unwanted lines from that vector and then parse the remaining lines into a data frame:

    # Read all lines into a vector:
    rawData <- readLines("Data/WoS/WoS_DS.txt")
    # Remove first line of that vector:
    rawData <- rawData[-1]
    # Remove last two lines of that vector:
    rawData <- head(rawData, -2)
    # Use it to create a data frame:
    DSData <- read.table(textConnection(rawData), sep = "",
                         col.names = c("Year", "Counts", "Percent"))
    DSData

    ##    Year Counts Percent
    ## 1  2019     63   9.648
    ## 2  2018    176  26.953
    ## 3  2017    160  24.502
    ## 4  2016    123  18.836
    ## 5  2015     65   9.954
    ## 6  2014     36   5.513
    ## 7  2013     12   1.838
    ## 8  2012      3   0.459
    ## 9  2011      2   0.306
    ## 10 2008      1   0.153
    ## 11 2007      2   0.306
    ## 12 2006      4   0.613
    ## 13 2002      1   0.153
    ## 14 2001      2   0.306
    ## 15 2000      1   0.153
    ## 16 1997      2   0.306
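
Dropping lines by position assumes every export has exactly one header line and two trailing notes. A slightly more defensive sketch, which is not part of the original notebook, keeps only the lines that look like data. The inline sample (abridged from the file above), the tab separators and the regular expression are all assumptions made for illustration.

```r
# A few lines mimicking the Web of Science export (abridged; tabs are assumed).
rawData <- c(
  "Publication Years\trecords\t% of 653",
  "2019\t63\t9.648",
  "2018\t176\t26.953",
  "2017\t160\t24.502",
  "(0 Publication Years value(s) outside display options.)",
  "(0 records (0.000%) do not contain data in the field being analyzed.)"
)

# Keep only lines of the form: <4-digit year> <integer count> <decimal percent>.
isData <- grepl("^[0-9]{4}[[:space:]]+[0-9]+[[:space:]]+[0-9.]+[[:space:]]*$",
                rawData)

# Parse the surviving lines; read.table() reads them directly via `text`.
DSSample <- read.table(text = rawData[isData], sep = "",
                       col.names = c("Year", "Counts", "Percent"))
DSSample
```

With this approach the header and the two notes are discarded because they do not match the pattern, wherever they appear in the file.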

OK, that works. We should create a function that takes a file name as a parameter and returns the data frame.

While we're at it, let's remove the "Percent" column, since we won't use it later. Let's also use a different label for the "Counts" column in each data frame, which will make merging the data frames simpler later.

Here it is:

    readFile <- function(file, colName) {
      rawData <- readLines(file)
      rawData <- rawData[-1]
      rawData <- head(rawData, -2)
      data <- read.table(textConnection(rawData), sep = "",
                         col.names = c("Year", colName, "Percent"))
      data$Percent <- NULL
      return(data)
    }
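
As a quick check, the function can be exercised on a small temporary file. The sketch below repeats the function so it runs on its own. The temporary file, its tab-separated layout and the name `sampleData` are assumptions for illustration; the two data rows are copied from the sample file shown earlier.

```r
# Same function as above, repeated so this snippet is self-contained.
readFile <- function(file, colName) {
  rawData <- readLines(file)
  rawData <- rawData[-1]
  rawData <- head(rawData, -2)
  data <- read.table(textConnection(rawData), sep = "",
                     col.names = c("Year", colName, "Percent"))
  data$Percent <- NULL
  return(data)
}

# Write an abridged copy of the sample export to a temporary file.
tmp <- tempfile(fileext = ".txt")
writeLines(c(
  "Publication Years\trecords\t% of 653",
  "2019\t63\t9.648",
  "2018\t176\t26.953",
  "(0 Publication Years value(s) outside display options.)",
  "(0 records (0.000%) do not contain data in the field being analyzed.)"
), tmp)

sampleData <- readFile(tmp, "Data Science")
names(sampleData)  # read.table() turns the space in the label into a dot
unlink(tmp)
```

Note that `read.table()` makes column names syntactically valid by default, which is why a label such as "Data Science" shows up as `Data.Science` in the resulting data frame.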

Processing the Data

Let's read data for all queries we did:

    AIData <- readFile("Data/WoS/WoS_AI.txt", "Artificial Intelligence")
    DLData <- readFile("Data/WoS/WoS_DL.txt", "Deep Learning")
    DMData <- readFile("Data/WoS/WoS_DM.txt", "Data Mining")
    DSData <- readFile("Data/WoS/WoS_DS.txt", "Data Science")
    ESData <- readFile("Data/WoS/WoS_ES.txt", "Expert Systems")
    MLData <- readFile("Data/WoS/WoS_ML.txt", "Machine Learning")
    NNData <- readFile("Data/WoS/WoS_NN.txt", "Neural Networks")

We need to merge all these data frames together. See the Stack Overflow question "Simultaneously merge multiple data.frames in a list" for some ways to do that.

    allData <- Reduce(function(dtf1, dtf2) merge(dtf1, dtf2, by = "Year", all = TRUE),
                      list(AIData, DLData, DMData, DSData, ESData, MLData, NNData))
    head(allData)

    ##   Year Artificial.Intelligence Deep.Learning Data.Mining Data.Science
    ## 1 1956                      NA            NA          NA           NA
    ## 2 1959                       2            NA          NA           NA
    ## 3 1960                      NA            NA          NA           NA
    ## 4 1962                       1            NA          NA           NA
    ## 5 1963                       1            NA          NA           NA
    ## 6 1964                       2            NA          NA           NA
    ##   Expert.Systems Machine.Learning Neural.Networks
    ## 1             NA               NA               1
    ## 2             NA                2              NA
    ## 3             NA               NA               1
    ## 4             NA                1               3
    ## 5             NA                1               1
    ## 6             NA                2              NA
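
To see how the `Reduce()` and `merge()` combination behaves, here is a minimal sketch on three toy data frames. The names `a`, `b`, `c3` and all values are made up for illustration and are not part of the Web of Science data.

```r
# Three toy data frames with partially overlapping years (made-up values).
a  <- data.frame(Year = c(2000, 2001, 2002), A = c(1, 2, 3))
b  <- data.frame(Year = c(2001, 2002, 2003), B = c(10, 20, 30))
c3 <- data.frame(Year = c(2002, 2004), C = c(100, 200))

# Full outer join on Year, folded over the list from left to right:
# merge(merge(a, b), c3), keeping every year found in any of the inputs.
merged <- Reduce(function(x, y) merge(x, y, by = "Year", all = TRUE),
                 list(a, b, c3))
merged
```

Because `all = TRUE` keeps every year that appears in any input, years missing from one data frame get `NA` in that data frame's column, which is the effect visible in the output above.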

This is a bit messy: merging the data frames introduced a lot of NAs wherever a term has no records for a given year. Let's replace them with zeros (see the Stack Overflow question "How do I replace NA values with zeros in an R dataframe?"):

    allData[is.na(allData)] <- 0
    str(allData)

    ## 'data.frame':    61 obs. of  8 variables:
    ##  $ Year                   : int  1956 1959 1960 1962 1963 1964 1965 1966 1967 1968 ...
    ##  $ Artificial.Intelligence: num  0 2 0 1 1 2 0 1 3 2 ...
    ##  $ Deep.Learning          : num  0 0 0 0 0 0 0 0 0 0 ...
    ##  $ Data.Mining            : num  0 0 0 0 0 0 0 0 0 0 ...
    ##  $ Data.Science           : num  0 0 0 0 0 0 0 0 0 0 ...
    ##  $ Expert.Systems         : num  0 0 0 0 0 0 0 0 0 0 ...
    ##  $ Machine.Learning       : num  0 2 0 1 1 2 0 1 3 2 ...
    ##  $ Neural.Networks        : num  1 0 1 3 1 0 3 16 3 2 ...
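
The `str()` output shows the count columns as `num` while `Year` is still `int`. A small sketch on made-up data suggests why: assigning the double `0` into an integer column converts that column to numeric, whereas assigning the integer `0L` would keep it integer. The data frame `counts` and its values are hypothetical.

```r
# Toy data frame with integer counts and one missing value (made-up values).
counts <- data.frame(Year = c(1997L, 1998L), Hits = c(2L, NA))

withDouble <- counts
withDouble[is.na(withDouble)] <- 0     # 0 is a double: Hits becomes numeric
class(withDouble$Hits)

withInteger <- counts
withInteger[is.na(withInteger)] <- 0L  # 0L is an integer: Hits stays integer
class(withInteger$Hits)
```

Either form works for the plot that follows; the difference only matters if later code depends on the columns being integers.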

Let's ignore results before 1980. Let's also remove data for 2019 since it is incomplete.

    allData <- allData[allData$Year >= 1980, ]
    allData <- allData[allData$Year < 2019, ]

We're ready to plot the data. Plotting multiple time series in ggplot2 requires melting the data into long format, so that we have one column for the year, one for the term and one for the count of that term in that year. We will add another column that defines the thickness of the line, so we can draw some terms with a thicker line.

    # Melt the data into long format, with specific column names.
    # data.table's melt() works on data.tables, so convert the data frame first.
    melted <- melt(as.data.table(allData), id.vars = "Year",
                   variable.name = "Term", value.name = "Count")
    # Set the thickness depending on the term.
    melted$thickness <- 1
    melted$thickness[melted$Term == "Data.Science"] <- 3
    # Plot it with style!
    ggplot(melted, aes(x = Year, y = Count, colour = Term, group = Term, size = thickness)) +
      geom_line() +
      scale_size(range = c(1, 3), guide = "none") +
      scale_x_continuous("Year", breaks = seq(1980, 2018, 2)) +
      guides(colour = guide_legend(override.aes = list(size = 3))) +
      ggtitle("Terms' Count") +
      theme(axis.title = element_text(size = 14),
            axis.text.x = element_text(size = 11, angle = -90, vjust = 0.5, hjust = 1),
            axis.text.y = element_text(size = 12),
            legend.title = element_text(size = 14),
            legend.text = element_text(size = 12),
            plot.title = element_text(size = 22))
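
For readers unfamiliar with melting, the reshaping step can be sketched in base R alone. This is only an illustration of the wide-to-long idea, not the code used for the plot; the data frame `wide` and its values are made up.

```r
# Toy wide table: one row per year, one column per term (made-up values).
wide <- data.frame(Year = c(2016, 2017),
                   Data.Science = c(12, 16),
                   Deep.Learning = c(5, 9))

terms <- setdiff(names(wide), "Year")

# Long format: one row per (Year, Term) pair, with the count in its own column.
long <- data.frame(
  Year  = rep(wide$Year, times = length(terms)),
  Term  = rep(terms, each = nrow(wide)),
  Count = unlist(wide[terms], use.names = FALSE)
)
long
```

Each term column of the wide table becomes a block of rows in the long table, which is the shape ggplot2 needs in order to map `Term` to colour and draw one line per term.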

Warning: Code and results presented in this document are for reference use only. Code was written to be clear, not efficient. There are several ways to achieve the results, not all of which were considered.

See the R source code for this notebook.